Back to Reality (almost)

March 9, 2011

by Christopher Gutteridge

We’re spending this week catching up on little jobs we put on hold to get data.southampton ready on time, but people keep offering me data!

Unilink Happy

The Unilink office have offered to take over looking after the bike shed location data, as they look after that service. That’s great! Our goal is for the ColinSourced data to be slowly handed over to parts of the university administration, with his dataset left to be the odds and ends which nobody is specifically responsible for. We’ve also been discussing how to advertise the bus-times related features to students and Unilink users. I don’t want to dive in with both feet for a week or two, in case there are issues that haven’t come to light yet. I’m dead proud of the fact that their receptionist told her boss “yes, I can look after that data, it’s just a Google spreadsheet, it’s easy!”. That’s our goal!

Muster Points

I got a great suggestion from Mike, our facilities manager, that we could add public safety information about buildings. We don’t need every detail of every fire escape (there are signs in buildings for that), but we could usefully add a list of first aiders and a map polygon of the muster point (which we can render on the building page). We’ve created a mini dataset for the ECS buildings’ muster points, but I’ve not yet had time to import it into the site.

Google data a bit Shonky

My contact for university buildings data pointed out (rightly) that we had the incorrect location on the Highfield Site page for a few things. That’s not my data!! It’s the labels added by Google based on whatever they’ve found on the web. In this case the data would be accurate enough if planning how to drive to the Gallery, but useless if you are plotting a site map.

I fixed it by just using “t=k” (satellite imagery only) instead of “t=h” (hybrid) in the map URL, which turns off the labels from Google.

Of course the best way to get better data into Google is to publish it on the web! Anybody got any advice on how to get Google to read the locations of our stuff?

How does it all work, then?

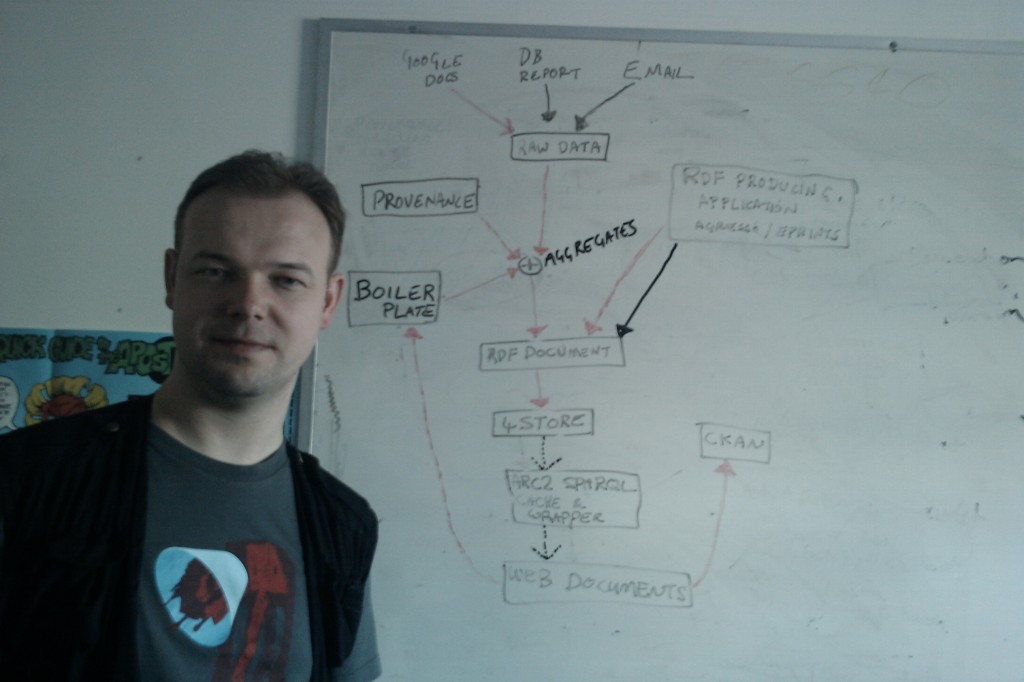

Sometime soon I’ll write an explanation on the Web Team Blog of how the systems on the data site work, but for now here’s the diagram.

The first thing you’ll notice about this diagram is that I’ve had a hair cut. I really hated how I looked in the last picture I posted here and was sick of trying to look after my hair. Fresh new look for Spring!

OK, the diagram has 3 colours of arrows but I couldn’t find 3 pens so the dotted arrows at the bottom are the 3rd colour. Doing more with less!

The black arrows represent manual processes like people emailing me data and me copying it into the correct directory.

The red arrows are all the stuff which happens when I run “publish dataset” (warning Perl!).

The dotted lines are triggered when a request is made to a URL. It’s not very complicated and the script does most of the heavy lifting.

The long term goal is for something to download copies of spreadsheets (etc.) once an hour and MD5 them. If the checksum has changed since the last publication date, then it’ll republish automatically. That way I can leave it polling the spreadsheet describing the location of our cycle sheds, and if it ever changes it’ll republish it without bothering me. I work hard to get to be lazy later!
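As a rough sketch of that polling idea (in Python rather than the Perl the site actually uses, and not the real code): take the bytes of the downloaded spreadsheet, MD5 them, and only republish when the checksum differs from the one stored at the last publication. The names `fetch`, `republish`, `state` and `poll_once` are all made up for illustration; the hourly scheduling is assumed to live elsewhere, for example in cron.

```python
import hashlib
from typing import Callable, Dict


def md5_of(data: bytes) -> str:
    """Return the hex MD5 digest of a downloaded file's bytes."""
    return hashlib.md5(data).hexdigest()


def poll_once(
    fetch: Callable[[str], bytes],            # hypothetical: downloads the source file
    republish: Callable[[str, bytes], None],  # hypothetical: runs the publish step
    state: Dict[str, str],                    # dataset name -> MD5 at last publication
    dataset: str,
) -> bool:
    """Check one dataset; republish it only if its checksum has changed.

    Returns True if a republish happened, False if nothing changed.
    """
    data = fetch(dataset)
    checksum = md5_of(data)
    if state.get(dataset) == checksum:
        return False  # unchanged since the last publication
    republish(dataset, data)
    state[dataset] = checksum  # remember what we just published
    return True
```

MD5 is fine here because it is only being used to spot changes, not for security. In practice `state` would need to be saved to disk between runs so that a restart does not trigger a republish of everything.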

Dave Challis would like to point out that I’ve skipped all his clever stuff around the 4store and ARC section so he’ll write a post later to explain that in more detail.

Recognition Back Home

There have been lots of tweets but few blog posts about this site so far, so it’s nice to be mentioned on the hyper-local blog from my home town!

A quick celebration and back to work

March 8, 2011

by Christopher Gutteridge

The site has been open to the public for 12 hours and in that time has logged 125411 hits. Due to the nature of the design, though, asking for an HTML page can generate several secondary requests from the server itself to the site for data, so that number should probably be divided by 5 or 10. Yes, we use our own public data sources to build the HTML pages, not a ‘back channel’ to the data. Keeps us honest, and able to catch issues quickly.

We’ve had hits from about 1300 distinct IP addresses since noon.

At 5:30 we had a meet up of some of those who’ve been involved in some way. We had some rather nice champagne, kindly given to us to congratulate us by Chris Phillips at IBM. In true engineering style we drank it from coffee mugs, which is almost exactly the same way we celebrated the release of EPrints 2.0 almost a decade ago.

Minor Fixes

March 7, 2011

by Christopher Gutteridge

We’ve corrected a few 404 errors, most notably that the Excel spreadsheet in the payments dataset didn’t link properly.

We are also aware that the payments dataset contains some broken dates. We are using the RDF exported from the very new and experimental Open Data reporting tool for Agresso. We checked that data really hard to ensure it didn’t contain any information that we didn’t have the right to publish or that was commercially sensitive, but totally missed a few 2011-13-13 dates! Unit 4, who make the software, are working on a fix as we speak. This is a “beta” service, so you’ve got to accept a few hiccups.
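For anyone wanting to catch this kind of thing in their own exports, a check along these lines would flag dates like 2011-13-13. This is only a sketch in Python, not part of our publishing scripts or of the Agresso tool; the function name `invalid_dates` is made up, and it assumes the dates have already been pulled out of the RDF as plain `YYYY-MM-DD` strings.

```python
from datetime import date
from typing import Iterable, List


def invalid_dates(values: Iterable[str]) -> List[str]:
    """Return the values that are not real calendar dates in ISO format."""
    bad = []
    for value in values:
        try:
            # Raises ValueError for impossible dates such as month 13
            # or 30 February, as well as for malformed strings.
            date.fromisoformat(value)
        except ValueError:
            bad.append(value)
    return bad
```

Running it over `["2011-03-07", "2011-13-13"]` would report only the second value.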

The site has stood up today very well and we’ve got some great ideas on how to improve it. Watch this blog!

Launch Monday!

March 7, 2011

by Christopher Gutteridge

We’ve just crossed over to midnight and the site is looking pretty darn linked. We’ll open it to the public once the final bits have been checked tomorrow.

The data.southampton team have produced a few initial applications for the data, and so have a few of our students, but this is just the start. I can’t wait to see what happens next.

This was lots of hard work trailblazing how to set the site up, but all our code is available! If you just want to make an RDF document describing buildings or a phonebook, we’ve got some nice scripts which turn simple spreadsheets into linked data! I’ve just imported a sheet of recycling points from around the university, and that’s the last thing for today.

One thing we’ve not yet really done is show how much goes on under the hood.

If you want to explore the data, swapping the .html in the address bar for .xml, .rdf and .json will often give some data. This data is all rather free-range right now, but we’ll progressively improve the site as we learn.
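If you would rather script that than edit the address bar by hand, something like this Python sketch would build the alternative addresses for a page. The function name is made up, and the example URL in the note below is a placeholder rather than a real page on the site. As noted above, not every page will have data in every format.

```python
from typing import Dict
from urllib.parse import urlsplit, urlunsplit

# The three extensions mentioned in this post.
FORMATS = ("xml", "rdf", "json")


def alternate_urls(html_url: str) -> Dict[str, str]:
    """Map each format to the page URL with .html swapped for that extension."""
    parts = urlsplit(html_url)
    if not parts.path.endswith(".html"):
        raise ValueError("expected a URL whose path ends in .html")
    stem = parts.path[: -len(".html")]
    return {
        fmt: urlunsplit(parts._replace(path=f"{stem}.{fmt}"))
        for fmt in FORMATS
    }
```

For a placeholder address such as `http://data.example.org/building/32.html`, the `"json"` entry would be `http://data.example.org/building/32.json`.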

Nightclub Bus-times Idea

March 4, 2011

by Christopher Gutteridge

My colleague, Toby Hunt, had a cool idea for a use for the live bus-times data: put a screen in a pub or nightclub showing when the next buses from the nearby bus-stops are due. More drinking time, and less time spent outside in the cold. For girls travelling home alone, waiting longer than needed at a bus-stop could even be a safety issue.

We are grabbing the data from the council website, and caching it for 30 seconds to try and minimise load on their server. There are 1410 bus-stops in the bus-stops dataset so in theory the maximum load on their server would be every one of those requested twice a minute. 47 requests per second (1410 × 2 ÷ 60) is rather a lot, but I doubt it’ll ever see 10% of that.
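The caching idea looks roughly like this in Python. It is an illustrative sketch and not the code the site runs: `TTLCache`, `fetch` and `max_upstream_rate` are names made up for the example, and `fetch` stands in for whatever actually asks the council website for one stop's times.

```python
import time
from typing import Callable, Dict, Tuple


class TTLCache:
    """Remember each stop's result for a fixed number of seconds."""

    def __init__(
        self,
        fetch: Callable[[str], str],  # hypothetical upstream request
        ttl_seconds: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[float, str]] = {}  # stop -> (expiry, value)

    def get(self, stop: str) -> str:
        now = self._clock()
        entry = self._entries.get(stop)
        if entry is not None and now < entry[0]:
            return entry[1]  # still fresh, no upstream request
        value = self._fetch(stop)
        self._entries[stop] = (now + self._ttl, value)
        return value


def max_upstream_rate(stops: int, ttl_seconds: float) -> float:
    """Worst-case upstream requests per second if every stop is always in demand."""
    return stops / ttl_seconds
```

With 1410 stops and a 30 second lifetime, `max_upstream_rate(1410, 30)` gives the 47 requests per second quoted above. That ceiling is only reached if every single stop is being asked for continuously.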

Welcome to the Data Blog

March 4, 2011

by Christopher Gutteridge

Welcome to the University of Southampton Data Blog. We will use this blog to update you on new features of the data site, cool things people have done with the data and so forth.

The techie details about how the site works and the different technologies involved will be posted to our Web Team blog.

Right now we’re about 60 hours from Data-Day, which is March 7th.

After launch we’ll continue to add datasets. There are datasets we have approval to publish but there are just not enough hours in the day! Datasets which are planned to follow in due course include:

- Building energy use data (we have a great metering system)

- What software is installed on workstation clusters and desktop machines of each academic unit

- Menus from the staff club

- Mapping from Programme of Study to individual modules

In the longer term we also hope to look into giving people the ability to access their personal data (timetables specifically), and more importantly authorise apps to have access to that data. See this blog post for some earlier thoughts on this concept.

The photographs of many of our main buildings were provided by a student, under a CC-BY license. This means anyone in the University, or beyond, can freely use them so long as they credit the artist. We don’t have photos of the more far flung or obscure buildings, but the student photographic society have taken that as a challenge and we look forward to seeing what they come up with.