Following our first post (https://blog.soton.ac.uk/mle/2013/12/17/blackboard-in-the-new-data-centre-what-does-it-mean-for-me/), which looked at the direct impact on users of our migration to the new data centre, we thought that our customers might also be interested in the benefits and features of the new data centre itself. We spoke to Mike Powell, the Data Centre Manager, to find out more.

Background

Data centres are not a new concept to the University. Since at least 1975 the basement of a university building has been used as a “temporary location” to house servers and IT infrastructure for the University. Located below the water table, the data centre has survived several floods and power failures. In 2006 an independent consultancy confirmed that the data centre had reached the end of its life: it was out of capacity in terms of the space and energy available to it, and it presented a risk to the core business of the University.

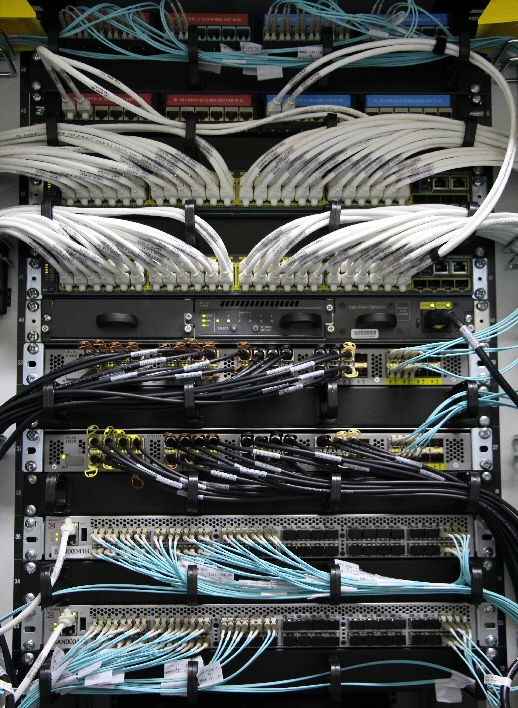

Having accepted the need for a new location, the University first looked for a site on campus. However, the potential energy requirement, the heat generated and the noise of the air conditioning would have had a negative effect on teaching and research in adjacent buildings. A further review by IBM recommended using an off-site location with a large warehouse, as has become the norm for organisations of our size. Eight locations were considered, and one was identified as a good match for the University’s requirements: it had particularly good fibre network provision and power availability, and it offered a suitable combination of land, surroundings and building envelope.

The University purchased this site in November 2011. Construction started in February 2012, and the completed data centre was handed over to the University in March 2013.

N+1

The new data centre is based on an “N+1” model for its mechanical and electrical support infrastructure. This means that a spare is available for every component: for example, a spare cooler, a spare generator and a spare power supply. As a result, disruption caused by maintenance or failure is kept to a minimum. The main aim of the data centre architecture is to provide the highest possible availability of service to all members of the University. This reflects the University’s reliance on the services provided by iSolutions and the high impact on its members whenever those services are unavailable. Almost every aspect of the University’s business, from downloading a course handout from Blackboard to analysing a computational model on Iridis, goes through the data centre, so such a model is essential to the success of the University and its members.
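For readers who like to see an idea in code, the N+1 principle can be sketched in a few lines of Python. This is only an illustration: the class name, the component names and all of the unit counts are hypothetical and are not figures from the data centre. “Required” stands for N, the number of units needed to carry the load, and a group meets N+1 when at least one more unit than that is installed.

```python
from dataclasses import dataclass


@dataclass
class ComponentGroup:
    """A group of identical units, e.g. all coolers (hypothetical model)."""
    name: str
    required: int   # N: units needed to carry the full load
    installed: int  # units actually installed

    def is_n_plus_1(self) -> bool:
        # N+1 means at least one spare beyond the N units required.
        return self.installed >= self.required + 1

    def survives(self, units_out: int) -> bool:
        # True if the load is still carried with `units_out` units
        # failed or taken out for maintenance.
        return self.installed - units_out >= self.required


# Illustrative numbers only; the real unit counts are not given in this post.
groups = [
    ComponentGroup("cooler", required=3, installed=4),
    ComponentGroup("generator", required=1, installed=2),
    ComponentGroup("power supply", required=2, installed=2),
]

for group in groups:
    status = "N+1" if group.is_n_plus_1() else "no spare"
    print(f"{group.name}: {status}")
```

In this sketch a group with one spare keeps running when a single unit is out, but not when two are out at the same time, which is the trade-off the N+1 model accepts.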

Migration

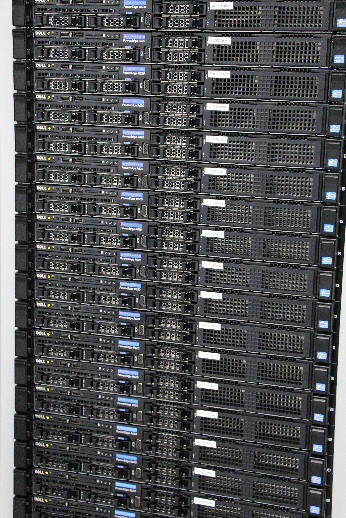

Right now iSolutions staff are hard at work migrating services to the new data centre, aiming to complete the migration by March 2014, one year after the data centre was handed over to the University. So far 56% of services have been migrated, which means that when you use services such as Blackboard, SharePoint 2010 and Planon you are already using services in the new data centre.

Environmental impact

Data centres require a lot of energy both to power the equipment and to keep it cool. The design of the new data centre is especially sensitive to the environment.

The new data centre has a “very good” BREEAM rating. One of the most interesting aspects is the Hybrid Cooling system, also known as “free cooling”. When the air temperature outside the data centre is below 21°C, rejected hot water from the server cabinets is moved to large radiators outside, where energy-efficient fans draw cold ambient air across them. The water, having been cooled, is returned to the data centre to begin the process of cooling the servers once more. This is one of the most efficient ways to cool a data centre.
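The free cooling rule can be illustrated with a short Python sketch. The 21°C threshold comes from the description above; everything else is an assumption made for illustration, including the function names, the sample temperatures and the label “mechanical” for whatever cooling is used when it is too warm outside, which this post does not describe.

```python
# Threshold taken from the post: free cooling applies below 21 degrees C outside.
FREE_COOLING_MAX_OUTSIDE_C = 21.0


def cooling_mode(outside_temp_c: float) -> str:
    """Return which cooling mode applies at a given outside temperature.

    "mechanical" is a placeholder name (an assumption) for the mode used
    when free cooling is not available.
    """
    if outside_temp_c < FREE_COOLING_MAX_OUTSIDE_C:
        return "free"
    return "mechanical"


def free_cooling_fraction(outside_temps_c: list[float]) -> float:
    """Fraction of temperature readings at which free cooling would apply."""
    if not outside_temps_c:
        raise ValueError("at least one temperature reading is required")
    free = sum(1 for t in outside_temps_c if cooling_mode(t) == "free")
    return free / len(outside_temps_c)


# Hypothetical readings, not measured data from the site.
sample_readings_c = [4.0, 12.5, 18.0, 21.0, 26.5]
print(free_cooling_fraction(sample_readings_c))
```

Run against a full year of local temperature readings, a calculation like this would give a rough estimate of how much of the year the radiators alone could handle the cooling.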

Most of the lighting employs LED lights. This ensures low energy consumption and a very long service life, meaning less maintenance in the long term.

The power conditioning equipment for the servers has been chosen for its high energy efficiency. While it was more expensive to purchase, it will have paid for itself in cost savings within three years.

Sustainability built into processes

Requests for Change (RFCs), which are part of our ITIL implementation, now include a carbon impact entry for the installation and decommissioning of servers. Energy usage is closely monitored and calculated. By the time all services have been migrated to the new data centre, energy usage will be significantly reduced.

The Data Centre Team

The data centre is staffed by a team of skilled professionals from iSolutions. We asked each of them to tell us their favourite aspect of working in the Data Centre.

Mike Powell

I feel privileged to have been involved in the delivery of this new data centre for the university and take great pride in working in this new high technology environment, which plays a key role in supporting the university in conducting research and learning and teaching.

Jon Raney

The most impressive aspect of the new data centre is the modern mechanical and electrical infrastructure supporting it behind the scenes. Other universities that have visited have shown great interest in the progress we have made, which is very encouraging.

Martin Beach

What’s so impressive about this data centre is that it has been purpose built to the University’s requirements using the latest best practices of the industry, quite a difference from our first data centre. This puts the University in a fantastic position to meet the technological challenges of the 21st Century.

Tony Gregan

The peacefulness of the new data centre’s remote location is a world away from Highfield Campus, allowing me to adopt a single-minded determination to make the most out of every day.

Dom Malson

For me the most exhilarating aspect of the new data centre is walking in and being hit by the intense sound of the Iridis 4 high performance computer.

Jon Stephenson

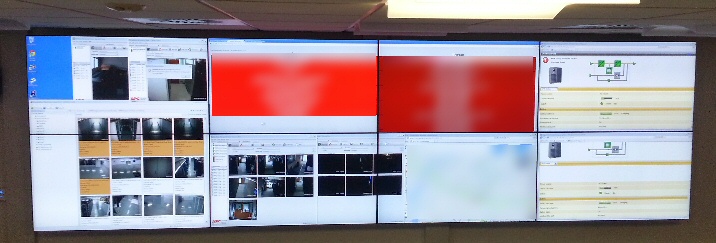

The new data centre’s state-of-the-art monitoring systems allow us to keep an eye on almost every aspect of our IT infrastructure and take proactive action to ensure services run smoothly.