I recently attended the annual Institutional Web Managers Workshop (IWMW) conference, this year held at the University of Kent. If you are unfamiliar with it, IWMW self-describes as the premier event for the UK’s higher educational web management community. This marks my second IWMW – I attended last year’s conference in Liverpool and was sufficiently impressed that I was keen to attend again this year.

I made rather more of the networking this time around, speaking to people from all manner of different institutions and organisations. It’s fascinating learning what themes are common across the sector and what’s unique to the University of Southampton. Spoiler: surprisingly little is unique — most institutions are going through similar challenges.

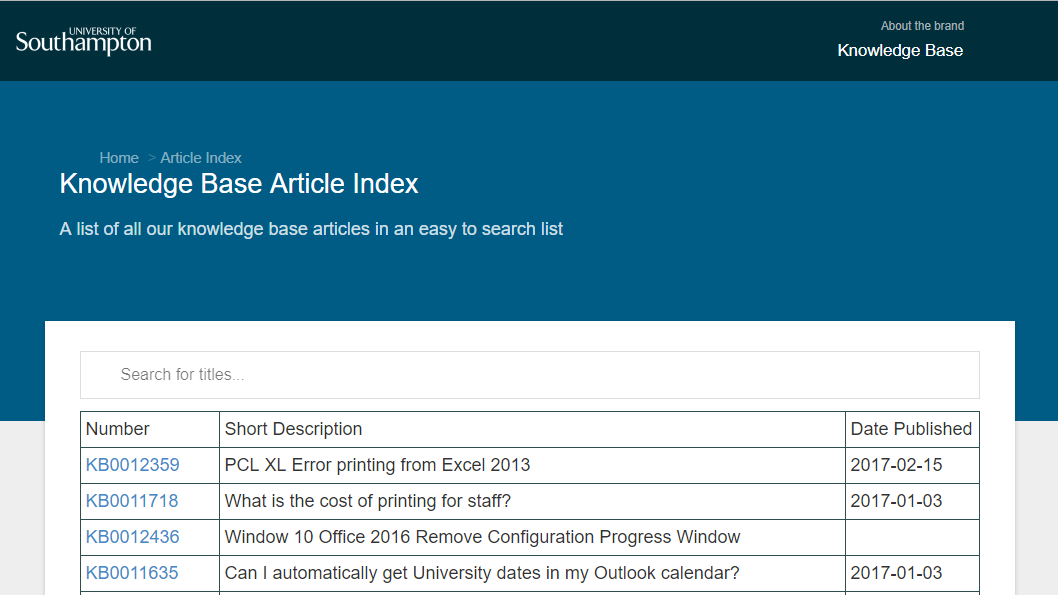

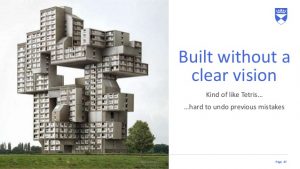

Andrew Millar from the University of Dundee on how we build websites. With so many stakeholders, is it any surprise we get such complexity?

One early revelation came from talking with some of the delegates from the University of Dundee. They have a UX specialist whose role includes ethnographic study of people using their ICT services. I’ve always felt that this is a direction we should be heading in. That view was solidified on the third day when Paul Boag said that not only should we be studying people as they use our services, we should be video-recording them and compiling a lowlights video. In essence, put together a 2-3 minute video of all the parts where your users are swearing at their computer in frustration! That way you end up distilling the biggest UX problems your sites have.

The University of Bath team also talked about product vision and how finding the true north of your products encourages a focus on people’s needs and on using data to make decisions. Our services should be simple and intuitive, and releases should be iterative and frequent.

The plenaries from Bath and Greenwich both made the very good point that we should stop users from owning the design of their sites; they are content creators, and we should remove the distraction of presentation from them as far as possible. Business value comes from delivering content. Greenwich suggested that we should talk about content instead of pages to help distinguish the material from its presentation.

St. Andrews have run with this approach by publishing their Digital Pattern Library (DPL), which codifies all aspects of their University’s brand. The documentation and process are accessible to all, so that it’s as easy as possible for their staff to produce St. Andrews-branded websites.

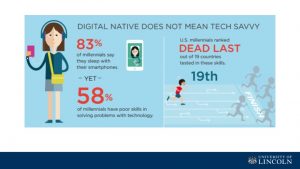

An insightful observation from Tom Wright, University of Lincoln: Digital native does not mean tech-savvy. Don’t assume that the younger generation are necessarily technology experts!

On a rather different tack, the University of Lincoln have made some astute observations about the current generation of undergraduates. It’s no secret that social media platforms, rich multimedia experiences and shared memes are a significant aspect of modern youth culture, but seemingly few organisations have sought to exploit that. Lincoln’s approach to marketing, by having current students create YouTube videos, is a nice touch and makes the experience much more engaging. I definitely recommend checking out their videos.

As well as the plenary talks, I also attended a workshop entitled How to Be a Productivity Ninja from Lee Garrett of Think Productive. Most of the IWMW workshops tend more towards the technical hard-skills end, but there are always one or two soft-skill management sessions and those are the ones I look out for. Claire Gibbons ran an excellent workshop at IWMW 2016 called Leadership 101 that I felt was the highlight of that conference. Productivity Ninja was this year’s equivalent for me; I learned a few great tricks to improve my productivity and have leads on some handy apps to help me organise things better. It was also nice to see one or two tricks mentioned that I already use.

In conclusion, I found attending IWMW 2017 a very worthwhile exercise and I am certainly looking forward to next year’s. I’m definitely keen to improve our UX testing with ethnographic studies and I’ll be investigating whether we can run a Productivity Ninja session at Southampton some time soon.