OpenIMAJ Performance 101: Feature Detection and Tracking

Look anywhere on the web and you’ll find loads of people complaining that Java is slow. Given that we’ve invested a lot of time in developing OpenIMAJ using Java, we want to demonstrate that this isn’t necessarily the case.

The speed of individual algorithms in OpenIMAJ has not been a major development focus; however, some decisions have been made during implementation to ensure efficiency (for example, making fields public rather than using getters and setters). To give an idea of the real-world performance of our Java code, we compared the time our SIFT implementation takes to process an image with the time taken by Lowe’s keypoint binary. For our test image, Lowe’s binary takes 3.47 seconds to extract the features (averaged over 100 tests). If we time our implementation and include the time taken to start the JVM, we get times of around 10 seconds. However, if we discount the time to start the JVM, the time averaged over 100 iterations is just 3.94 seconds, which is agreeably close to the native version.
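To make the distinction between the two Java figures concrete, here is a minimal sketch of timing a task from inside an already-running JVM, so that start-up cost is excluded. It is not the harness we used for the numbers above: the class name `InProcessTimer`, the warm-up runs and the stand-in workload are all assumptions for illustration, and the `Runnable` would be replaced by the actual feature extraction call.

```java
public class InProcessTimer {

    /**
     * Mean wall-clock time, in milliseconds, of `runs` calls to `task`.
     * The clock only runs while the task runs, so JVM start-up is excluded.
     * The warm-up runs are an assumption of this sketch: they give the JIT
     * compiler a chance to compile the hot code before timing begins.
     */
    public static double meanMillis(Runnable task, int warmupRuns, int runs) {
        if (runs <= 0) {
            throw new IllegalArgumentException("runs must be positive");
        }
        for (int i = 0; i < warmupRuns; i++) {
            task.run(); // untimed
        }
        long totalNanos = 0;
        for (int i = 0; i < runs; i++) {
            long start = System.nanoTime();
            task.run();
            totalNanos += System.nanoTime() - start;
        }
        return totalNanos / 1e6 / runs;
    }

    public static void main(String[] args) {
        // Hypothetical stand-in workload; swap in the real extraction call.
        double[] sink = new double[1];
        Runnable work = () -> {
            double sum = 0;
            for (int i = 1; i < 1_000_000; i++) {
                sum += Math.sqrt(i);
            }
            sink[0] = sum; // keep the result so the loop is not optimised away
        };
        System.out.printf("mean over 100 runs: %.3f ms%n", meanMillis(work, 10, 100));
    }
}
```

Timing the whole process from the command line instead (for example with the shell's `time`) would include JVM start-up and class loading, which is the kind of measurement behind the larger figure.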

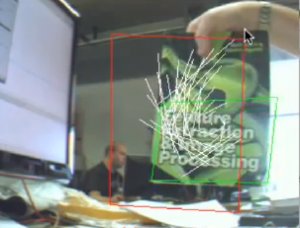

We’ve also made some demos to show that OpenIMAJ can be used for near real-time applications with a live video input. The video below shows real-time SIFT detection and matching. Detection and matching are performed on every frame.

The next video, below, shows a hybrid tracking approach in which we use SIFT extraction and matching to find a seed set of matches, and then use a Kanade-Lucas-Tomasi (KLT) feature tracker to track from that point on. If the confidence in the KLT tracking becomes too low, we automatically perform SIFT extraction and matching to re-initialise the tracker.
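The control flow of the hybrid approach can be sketched roughly as follows. This is not the demo code and does not use the OpenIMAJ API: `Seeder` and `PointTracker` are hypothetical interfaces standing in for SIFT extraction and matching and for the KLT tracker. The single scalar confidence, the threshold, and re-seeding on the same frame on which tracking fails are all assumptions made for illustration.

```java
import java.util.List;

public class HybridTracker<F, P> {

    /** Hypothetical stand-in for SIFT extraction and matching. */
    public interface Seeder<F, P> {
        /** Returns the seed points found in the frame (empty if none). */
        List<P> findSeedPoints(F frame);
    }

    /** Hypothetical stand-in for a KLT feature tracker. */
    public interface PointTracker<F, P> {
        void initialise(F frame, List<P> seeds);

        /** Tracks into the new frame and returns a confidence score. */
        double track(F frame);
    }

    private final Seeder<F, P> seeder;
    private final PointTracker<F, P> tracker;
    private final double minConfidence;
    private boolean tracking = false;

    public HybridTracker(Seeder<F, P> seeder, PointTracker<F, P> tracker, double minConfidence) {
        this.seeder = seeder;
        this.tracker = tracker;
        this.minConfidence = minConfidence;
    }

    /**
     * Processes one frame. Returns true if the frame was handled by the
     * tracker alone, false if seeding had to be run on it.
     */
    public boolean process(F frame) {
        if (tracking) {
            double confidence = tracker.track(frame);
            if (confidence >= minConfidence) {
                return true;
            }
            tracking = false; // confidence too low: fall through and re-seed
        }
        List<P> seeds = seeder.findSeedPoints(frame);
        if (!seeds.isEmpty()) {
            tracker.initialise(frame, seeds);
            tracking = true;
        }
        return false;
    }
}
```

The point of the design is that the seeding step only runs when there is nothing to track or the tracker has lost confidence; every other frame goes through the tracker alone.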

The code for the demos that are shown in the videos above can be found in the OpenIMAJ source in the demos/VideoSIFT directory. If you want to browse the implementation for the first demo, click here, and for the second click here.